Word of the Day: Cognitive Surrender

Will we give up our minds to make nice with AI?

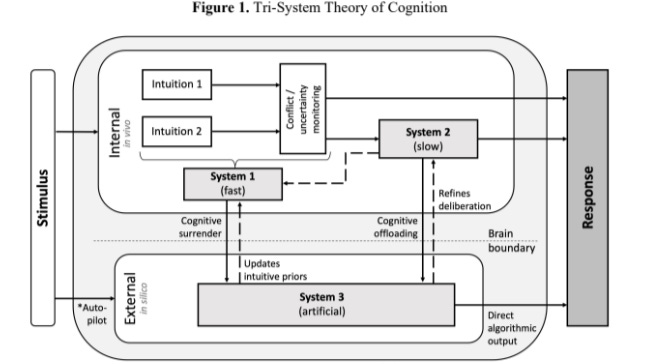

Researchers Steven Shaw and Gideon Nave of the University of Pennsylvania have added a third reasoning ‘system’ to the Kahneman Fast-and-Slow model. They’ve dubbed this the tri-system theory of cognition:

The new part is at the bottom, System 3, which notably occurs not in the human mind but within the workings of an artificial intelligence.

As described by Matt Gaskell, this is a step beyond cognitive offloading:

Cognitive offloading is strategic. You delegate a discrete task to a tool. You’re still in control. You’re still thinking. The calculator does the maths so you can focus on the problem. The spreadsheet shows you the numbers so you can think about them.

Cognitive surrender is different. You adopt the AI’s output, without verification, without critical evaluation. The tool doesn’t assist your reasoning – it replaces it. And you might not even notice it’s happened.

Worst of all, cognitive surrender comes with real costs. It turns out that those more likely to trust AI are more susceptible to surrender, and they are more confident of the outcome even when the AI has hallucinated.

Again, Gaskell:

Every time someone on your team pastes a question into ChatGPT and copies the answer into a document without reading it critically, that’s cognitive surrender. Every time a manager asks AI to write a performance review and sends it unedited, that’s cognitive surrender. Every time a strategist asks AI for a recommendation and presents it to the board as their own analysis, that’s cognitive surrender.

And the uncomfortable part is: they’ll feel more confident doing it.

I first stumbled upon the concept of cognitive surrender in this passage from Ezra Klein’s I Saw Something New in San Francisco:

Researchers have drawn a distinction between “cognitive offloading” and “cognitive surrender.” Cognitive offloading comes when you shift a discrete task over to a tool like a calculator; cognitive surrender comes when, as Steven Shaw and Gideon Nave of the University of Pennsylvania put it, “the user relinquishes cognitive control and adopts the A.I.’s judgment as their own.” In practice, I wonder whether this distinction is so clean: My use of calculators has surely atrophied my math skills, as my use of mapping services has allowed my (already poor) sense of direction to diminish further.

But cognitive surrender is clearly real, and with it will come the atrophy of certain skills and capacities, or the absence of their development in the first place. The work I am doing now, struggling through yet another draft of this essay, is the work that deepens my thinking for later.

Another fun fact I gleaned from Klein:

According to a new NBC News survey, public opinion on A.I. has turned sharply negative; it now polls beneath ICE or Donald Trump (though above the Democratic Party or Iran). There is an A.I. backlash building, and understandably so: Who wants a technology that may take your job and eventually threaten human sovereignty? At the same time, A.I. is everywhere, and being woven into almost everything, and a staggering number of Americans use it daily. Backlash or no, I expect that trend to accelerate, not reverse.

Who wants a technology that untrains your brain?

But we huddle in the shadow of a near-future world where AI is atop the heap, supported by its myrmidons:

Under AI, the robot is the true elite and the next layer down are those who adjust its variables, like worshipers tweaking the corpse of a dead god.

| Kyle Chayka, The Revenge of Elitism

And all the rest become NPCs.